
18/09/2022

Today Artificial Intelligence (AI) is making decisions that affect many aspects of our everyday lives. AI is indeed pervasive, with partially automated processes now in place across medical triaging, job candidate screening, loan approvals and security camera facial recognition.

It is expected that AI will lead to unprecedented growth in productivity, while also achieving a high degree of personalisation in many daily tasks like shopping, banking, searching for entertainment services and games, along with interacting with government agencies.

When AI first appeared, it was commonly assumed that its decisions, being made by an inanimate computer, would not suffer from human biases. Unfortunately, because AI derives its functionality from data based on past human interactions, it can acquire those same biases and even amplify them. As a result, problems have occurred in facial recognition [1] (particularly in law enforcement), preferential ridesharing, recruitment and credit assessments. Many AI solutions have been shown to reinforce historic gender or racial biases. Chatbots have learnt to tweet, but having ‘machine learned’ from unacceptable content, they have amplified it. Such results can seriously, and quite rationally, erode public trust in AI technology. If left unaddressed, this lack of trust will slow or halt the adoption of AI and delay the productivity benefits it can offer.

In response to these concerns, research communities, standards bodies, technology providers and policymakers are investing seriously in defining fairness and non-discrimination principles, technical tools and processes, organisational constructs, and policies and governance procedures, to ensure that AI technologies [2] can be, and are, applied within an ethical framework.

IBM began its AI research journey in 1950, before the term ‘AI’ had even been coined. Since then, IBM has consistently advanced the state of AI, with milestones such as The Checkers Player [3], the first ‘machine learnt’ game, in 1959; Computer Backgammon in 1992; and Deep Blue [4], which famously beat the reigning World Chess Champion in 1997. In 2011 Watson [5] was crowned the undisputed champion in Jeopardy!, and in 2018 Debater [6] became the first AI system to debate humans on complex topics in a classic college debate setting.

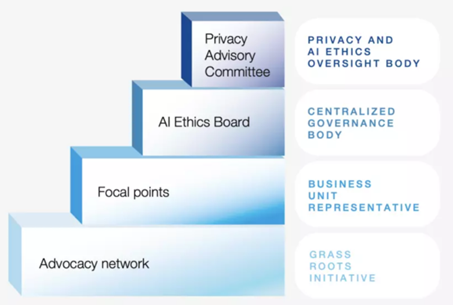

When developing and deploying AI, IBM uses a centralised governance construct comprising a Privacy Advisory Committee, an AI Ethics Board, numerous strategically placed Focal Points, and an Advocacy Network (see Figure 1):

- The Privacy Advisory Committee defines the overall vision and oversees the role of the AI Ethics Board. It determines the AI risk appetite, and acts as the final corporate escalation point if and when required.

- The IBM AI Ethics Board sets and maintains the IBM Principles for Trust and Transparency and the Pillars of Ethics (see the next section), which guide the ethical development and deployment of AI systems by IBM and its clients. It also informs the training of IBM employees and takes responsibility for company-wide education on the ethics of technology. The Board is co-chaired by the IBM Chief Privacy Officer and the IBM Global Leader of AI Ethics. It has diverse representation from the business units and corporate functions, to ensure that AI risks are assessed company-wide, that all appropriate decision-making parties are represented and that its edicts reach across the entire company. As an example, IBM’s decision to stop research, development and offerings of general-purpose facial recognition/analysis software came from the AI Ethics Board [7]. At times the Board’s scope extends beyond AI to IBM’s entire technology portfolio; for example, it also helped guide IBM’s response to the COVID-19 pandemic [8].

- Complementing the Ethics Board, IBM has established Focal Points within each business unit to ensure that all of IBM’s products and services adhere to the Principles for Trust and Transparency. They provide feedback to the Board and bring open issues to the Board’s attention.

- The Advocacy Network focuses on promoting a culture of ethical, responsible and trustworthy technology development and deployment. Its members showcase to the Ethics Board grassroots ethics initiatives that can be scaled (for example, an AI Ethics chatbot initially built for a client was adapted and adopted at scale for internal IBM use).

The IBM Ethics Board started on this journey by defining some high-level Principles for Trust and Transparency [9]. These are as follows:

- The purpose of AI is to augment – not replace – human expertise, judgement and decision-making;

- Data and insights generated from data belong to their creator, not their IT partner;

- All new technologies, like AI, must be transparent, explainable, and free of harmful and inappropriate bias in order for society to trust them.

These principles go beyond merely addressing the development of AI. They also aim to make it more easily understood, and address the critical issue of trust in data gathering, ownership and use. Trust starts with the intent behind the data gathering, and any AI based on the gathered data must respect this original intent. To recognise this, the IBM Ethics Board developed its so-called Pillars of AI Ethics [10]: Explainability, Fairness, Robustness, Transparency and Privacy [11].

Each of the five pillars of AI Ethics (Explainability, Fairness, Robustness, Transparency and Privacy) is the subject of a separate research initiative within IBM Research, and each is also connected to, and benefits from, the work of many other AI research communities.

Figure 1: Governance structure for IBM’s AI efforts [12]

To operationalise AI Ethics further, down to the level of writing code, IBM Research has created five open-source “Trustworthy” AI toolkits which are now widely used across the industry:

- AI Explainability 360 [13]: uses various algorithms to make AI models more comprehensible.

- AI Fairness 360 [14]: provides various fairness metrics and bias mitigation algorithms. These include metrics to assess if certain individuals or groups will receive a fair go in AI implementation.

- Adversarial Robustness 360 [15]: helps developers strengthen their algorithms against adversarial attacks such as poisoning, inference, extraction and evasion.

- AI FactSheets 360 [16]: facilitates understanding and governance of AI models through simple FactSheets (collections of facts about an AI model).

- Uncertainty Quantification 360 [17]: uses a set of tools to test the reliability of AI predictions. It characterises boundaries on model confidence (given the available data).

Being open source, these toolkits have attracted a large community of AI contributors in addition to IBM staff. They help bridge the gap between research and practice, enabling data scientists and data engineers to advance the development and deployment of AI in a responsible manner.
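To make the idea of a fairness metric concrete, the following is a minimal sketch in plain Python of two commonly used group fairness measures, statistical parity difference and disparate impact. It does not use the AI Fairness 360 API; the function names, the group labels "A" and "B", and the screening outcomes are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favourable outcomes for each group.

    `records` is an iterable of (group, selected) pairs, where
    `selected` is True when the candidate received the favourable outcome.
    """
    totals = defaultdict(int)
    favourable = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        favourable[group] += int(selected)
    return {group: favourable[group] / totals[group] for group in totals}


def statistical_parity_difference(rates, unprivileged, privileged):
    """Difference in selection rates; 0 means both groups are selected equally often."""
    return rates[unprivileged] - rates[privileged]


def disparate_impact(rates, unprivileged, privileged):
    """Ratio of selection rates; 1 means parity.

    Assumes the privileged group's selection rate is non-zero.
    """
    return rates[unprivileged] / rates[privileged]


# Hypothetical screening outcomes: group A is shortlisted 6 times out of 10,
# group B only 3 times out of 10.
records = (
    [("A", True)] * 6 + [("A", False)] * 4
    + [("B", True)] * 3 + [("B", False)] * 7
)

rates = selection_rates(records)
spd = statistical_parity_difference(rates, unprivileged="B", privileged="A")
di = disparate_impact(rates, unprivileged="B", privileged="A")

print(f"selection rates: {rates}")
print(f"statistical parity difference: {spd:.2f}")
print(f"disparate impact: {di:.2f}")
```

In this made-up data, group B is shortlisted half as often as group A, so the disparate impact ratio falls well below 1. A gap like this is the kind of signal a fairness assessment would flag for human review; what threshold counts as acceptable is a policy decision, not something the metric decides.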

These toolkits can have a real-world impact. Recruiting systems that screen candidates have long raised concerns about fairness, tending to replicate or even exacerbate the gender and racial biases of the past that are reflected in historical data. One major U.S. retailer [18] wanted to embed fairness and trust, including the ability to identify bias and explain decisions, within its AI and machine learning model used for hiring. Data science leaders wanted to be able to translate the models’ decisions and results easily, in a way any hiring manager could understand. The company also knew it needed to operationalise AI governance to get more of its business users on board. IBM’s Data Science and AI Elite team showed the client how AI models could be managed for accuracy and fairness, and IBM’s Expert Lab services were called upon to drive the ongoing teamwork needed to reach the corporation’s goals. The company is now able to proactively monitor and mitigate bias in its hiring processes.

IBM also uses Trustworthy AI toolkits in its internal HR and recruitment processes, based on the underlying principle that AI provides insights and augments human intelligence but does not replace human decisions. AI has been used effectively to prompt recruiters to present a more diverse set of candidates to hiring managers, and to support managers in making hiring decisions with quantitative measures based on technical skills, cognitive ability or learning agility. Internal validation of post-hire performance data showed these evidence-based assessments resulted in an increase in the proportion of underrepresented minorities among new hires from 18.9% in 2018 to 21.3% in 2020 [19]. AI has also been used to support managers with personalised salary increase recommendations (accompanied by explanation and reasoning), where fairness assessments identify and mitigate potential bias in the recommendations. Results demonstrated that attrition was reduced by one-third when managers followed the AI recommendations.

In another example, Regions Bank [20] faced the challenge of an advanced analytics practice that too often relied on siloed data sets, development teams working in isolation, and disparate and somewhat inconsistent development methods. Working with IBM, Regions Bank has transformed its advanced analytics using modern tools and new open and transparent methodologies. After creating an analytics Center of Excellence, IBM helped Regions Bank bring data into a centralised environment, apply more machine learning and AI techniques and, above all, adopt an end-to-end business value approach that includes AI quality control. This quality control provides the Bank with a continuous read on model accuracy, helping it achieve higher confidence in the quality of predictions as it uses the technology to reduce risk, detect fraud, and gain consumer insights.

The benefits of AI stand to grow exponentially, propelled by leaps forward in the abundance of data and the power of next-generation computing technologies, but only if society trusts it. With trust as the cornerstone of our leadership in AI innovation, IBM seeks to leverage AI in ways that strengthen trust in the technology as a force for positive change.

Read CEDA's report, AI: Principles to Practice.

[1] Buolamwini, Joy, and Timnit Gebru, “Gender Shades: Intersectional Accuracy Disparities in Commercial Gender Classification”, Proceedings of the 1st Conference on Fairness, Accountability and Transparency, in Proceedings of Machine Learning Research, vol. 81, 2018, pp. 77-91, https://proceedings.mlr.press/v81/buolamwini18a.html

[2] Raji, Inioluwa Deborah, and Joy Buolamwini, “Actionable Auditing: Investigating the Impact of Publicly Naming Biased Performance Results of Commercial AI Products”, AIES, Proceedings of the 2019 AAAI/ACM Conference on AI, Ethics, and Society, January 2019, https://dl.acm.org/doi/10.1145/3306618.3314244

[7] Krishna, Arvind, “IBM CEO’s Letter to Congress on Racial Justice Reform”, IBM THINKPolicy Blog, 8 June 2020, www.ibm.com/blogs/policy/facial-recognition-sunset-racial-justice-reforms

[12] https://www.weforum.org/agenda/2021/09/case-study-on-ibm-ethical-use-of-artificial-intelligence-technology/

[18] https://www.ibm.com/blogs/journey-to-ai/2021/05/building-an-ai-framework-for-fairness-in-hiring-a-u-s-employer-puts-antibias-first/

[19] IBM, IBM 2020 Diversity & Inclusion Report, pp. 80-81, 2021, https://www.ibm.com/impact/be-equal/pdf/IBM_Diversity_Inclusion_Report_2020.pdf